Introduction

We had an issue where Windows Autopilot enrollments were hanging (and later failing) in the Account Setup phase. The problem occurred at the point where the device should have been receiving a user-targeted certificate via SCEP/NDES, but the certificate request was failing. The troubleshooting below should hopefully help you in a similar situation.

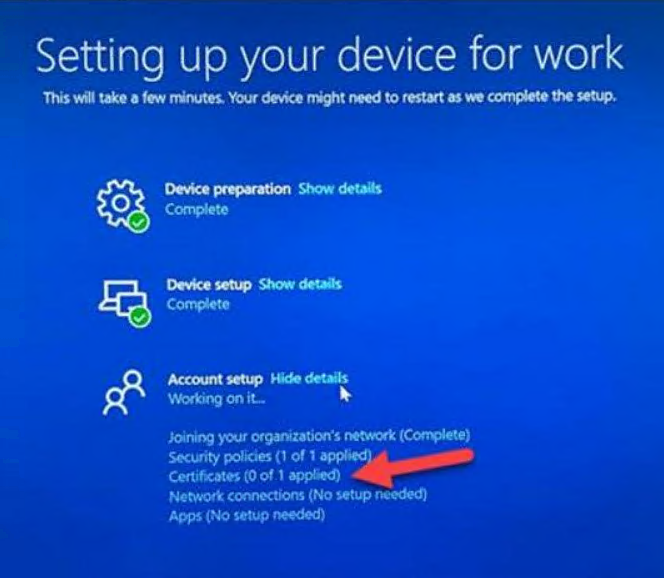

We saw the following during the Account Setup phase of the Enrollment Status Page (ESP). On the Certificates step, it would sit there for the configured timeout (70 minutes in our case) before failing.

Certificates (0 of 1 applied)

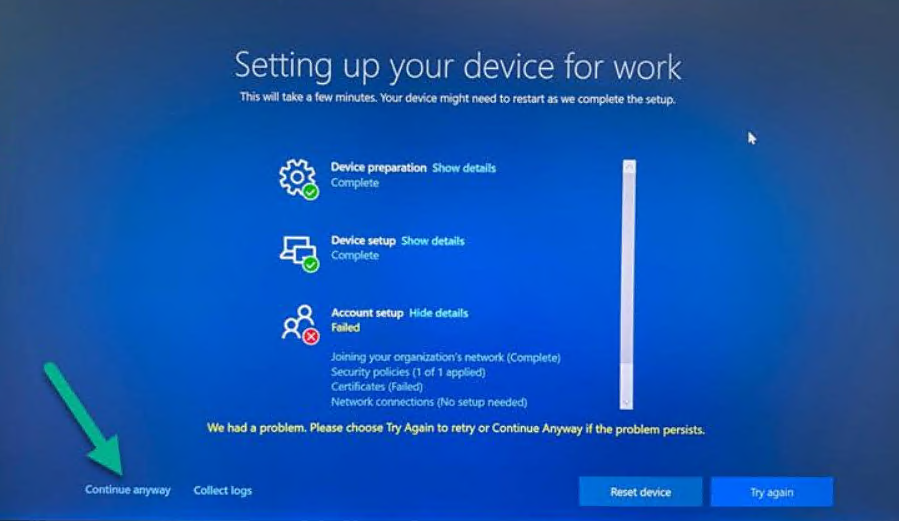

After the configured Windows Autopilot enrollment timeout elapsed, a failure message appeared.

Clicking Continue Anyway at least allowed the enrollment to complete with a mostly functional desktop.

Some items that required this SCEP certificate included the following:

- Enterprise Wi-Fi

- Remote Desktop Protocol (RDP)

- Accessing shares and other on-premises resources

Troubleshooting

Note: Not all SCEP failures produce the same errors; however, this troubleshooting flow should hopefully be enough to point you toward the cause of the failure.

Review event viewer logs on an affected client

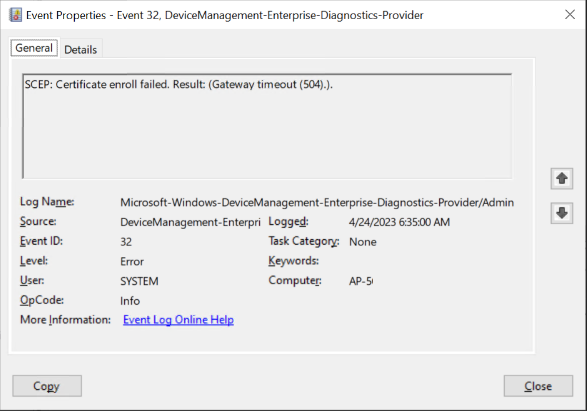

To troubleshoot this, we started with the Event Viewer on a device that had failed enrollment and where we had chosen Continue Anyway.

We looked at the DeviceManagement-Enterprise-Diagnostics-Provider log in Applications and Services Logs/Microsoft/Windows.

That led to the discovery of two main errors, which repeated over and over:

Event Id 32

SCEP: Certificate enroll failed. Result: (Gateway timeout (504).).

and

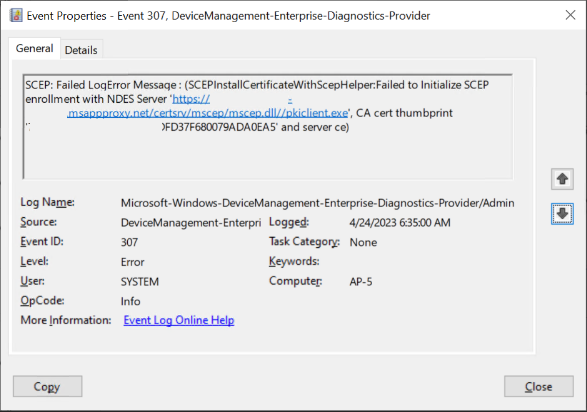

Event Id 307

SCEP: Failed LogError Message : (SCEPInstallCertificateWithScepHelper:Failed to Initialize SCEP enrollment with NDES Server ‘https://scepintunendes-company.msappproxy.net/certsrv/mscep/mscep.dll//pkiclient.exe’, CA cert thumbprint ‘…501D0CA.,,,,F680079ADA0EA5’ and server ce)

These errors kept repeating for the duration of the configured timeout (and after).
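If you prefer the command line to scrolling through the Event Viewer, the same two events can be filtered out with the built-in `wevtutil` tool. The following is a minimal sketch in Python, assuming the log channel is named `Microsoft-Windows-DeviceManagement-Enterprise-Diagnostics-Provider/Admin`; the helper name `build_query` and the default of 20 events are our own choices for illustration.

```python
import subprocess
import sys

# Assumed channel name for the Admin log found under
# Applications and Services Logs/Microsoft/Windows.
CHANNEL = "Microsoft-Windows-DeviceManagement-Enterprise-Diagnostics-Provider/Admin"


def build_query(event_ids, max_events=20):
    """Return a wevtutil command that lists the newest matching events as text."""
    id_filter = " or ".join(f"EventID={i}" for i in event_ids)
    xpath = f"*[System[({id_filter})]]"
    return [
        "wevtutil", "qe", CHANNEL,
        f"/q:{xpath}",       # XPath filter on the event ID
        f"/c:{max_events}",  # cap the number of events returned
        "/rd:true",          # read newest events first
        "/f:text",           # human-readable output
    ]


if __name__ == "__main__" and sys.platform == "win32":
    # Event IDs 32 and 307 are the two SCEP errors described above.
    result = subprocess.run(build_query([32, 307]), capture_output=True, text=True)
    print(result.stdout or result.stderr)
```

Run it on the affected client; reading this log may require an elevated prompt.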

Using the URL revealed in event ID 307, we could attempt to reach the same SCEP server using a web browser, both from an internal client computer and from an external (non-proxied) client.

https://scepintunendes-company.msappproxy.net/certsrv/mscep/mscep.dll/

The results were the same in both cases: the request produced Gateway Timeout errors, shown below:

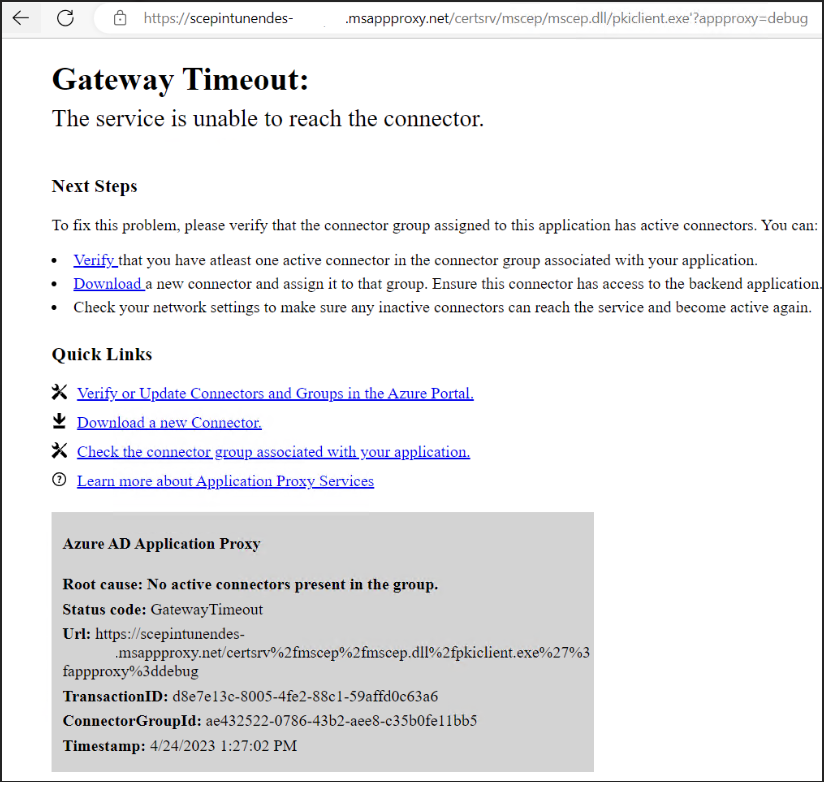

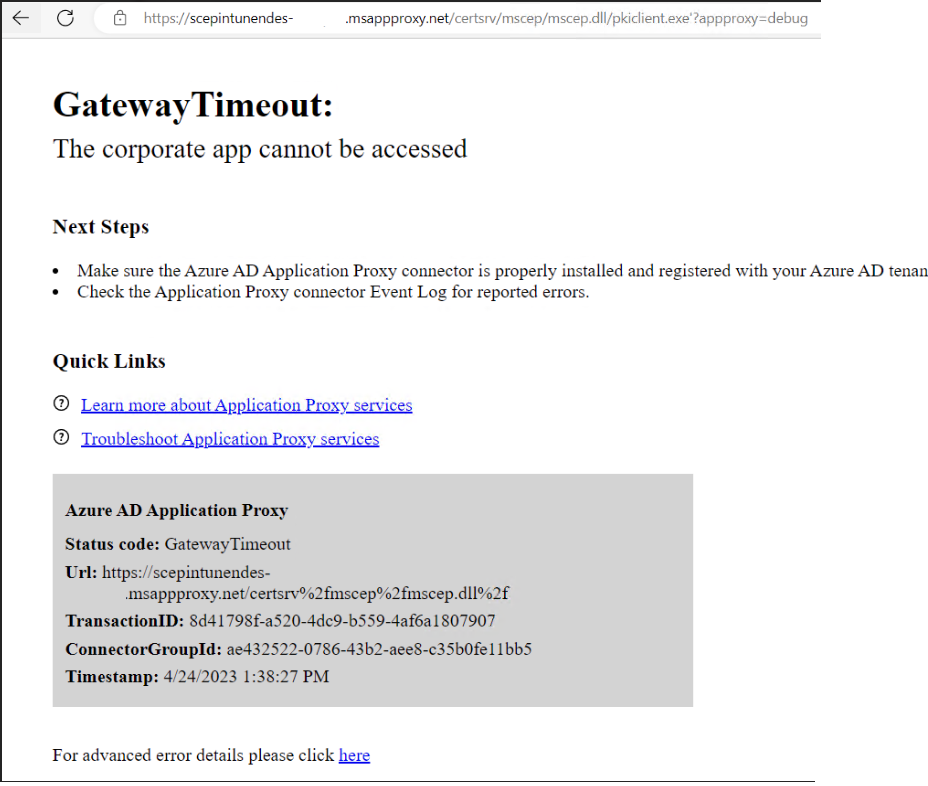

Gateway Timeout:

The service is unable to reach the connector

and, when specifying the full URL from the event viewer including pkiclient.exe,

Gateway Timeout:

The corporate app cannot be accessed

Based on these results, which were identical internally and externally, we concluded that the error was coming from Azure and was not related to our internal firewall or proxy solutions.
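The browser test can also be scripted, which makes it easy to repeat from several networks. This is a minimal sketch, assuming you only want to know whether the endpoint answers with the same 504 reported in event ID 32; the function names `fetch_status` and `is_gateway_timeout` are ours, and connection-level failures (DNS, TLS, refused connections) are deliberately left to raise.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=30):
    """Return the HTTP status code for a GET request, including error statuses."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx responses; the status code is what we want.
        return err.code


def is_gateway_timeout(status):
    """True when the status matches the 504 seen in event ID 32."""
    return status == 504


# Example (performs a real request, so it is left commented out):
# status = fetch_status(
#     "https://scepintunendes-company.msappproxy.net/certsrv/mscep/mscep.dll/"
# )
# print(status, is_gateway_timeout(status))
```

Getting a 504 from both an internal and an external client points at the same conclusion we reached with the browser.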

The hint as to what was causing the problem was in the Next steps shown with the error:

- Make sure the Azure AD Application Proxy connector is properly installed and registered with your Azure AD tenant.

- Check the Application Proxy connector Event Log for reported errors

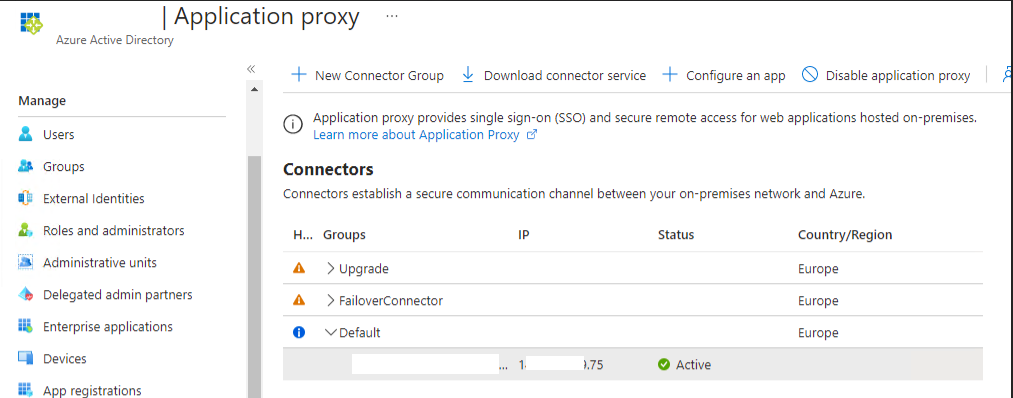

A quick look at the Application Proxy in Azure revealed that it was Active.

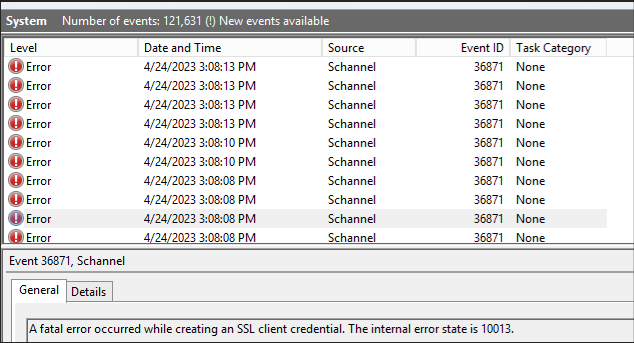

However, the event log (obfuscated) of the on-premises server listed in the Application Proxy told a different story: it contained loads and loads of event ID 36871 entries.

A fatal error occurred while creating an SSL client credential. The internal error state is 10013.

That event id led us to the following docs.

- https://learn.microsoft.com/en-us/answers/questions/345906/a-fatal-error-occurred-while-creating-an-ssl-clien

- https://learn.microsoft.com/en-us/answers/questions/728944/a-fatal-error-occurred-while-creating-a-tls-client?page=2#answers

However, neither of these helped; they just delayed us further.

We did, however, eventually figure out the issue: a self-signed certificate on the Application Proxy had expired.

To summarize, the issue had nothing to do with the SCEP certificates themselves or with the NDES server. Instead, it was down to the Azure Application Proxy server itself, which is used to proxy requests from Jamf & Intune to the Azure AD Application Proxy Connector. To solve it, we had to renew the certificate by re-registering the proxy connector with Azure AD.
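Since the root cause was an expired certificate, a simple expiry check would have warned us in advance. The sketch below is only an illustration: it assumes you have already read the certificate's "not after" date from the connector server (how you export it is not shown here), and the function name `days_until_expiry` is hypothetical.

```python
import ssl
import time


def days_until_expiry(not_after, now=None):
    """Days until a certificate's notAfter time; negative means already expired.

    not_after uses the "Jan  5 09:34:43 2018 GMT" format that Python's ssl
    module uses for certificate validity dates.
    """
    if now is None:
        now = time.time()
    expires = ssl.cert_time_to_seconds(not_after)
    return (expires - now) / 86400  # 86400 seconds per day


# Example with a made-up date: a negative result means the certificate has expired.
# print(days_until_expiry("Jan  5 09:34:43 2018 GMT"))
```

Scheduling a check like this and alerting when the value drops below a threshold of your choosing would catch the problem before enrollments start to fail.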

Thank you